|

比如:《孤独患者》《爱值得》

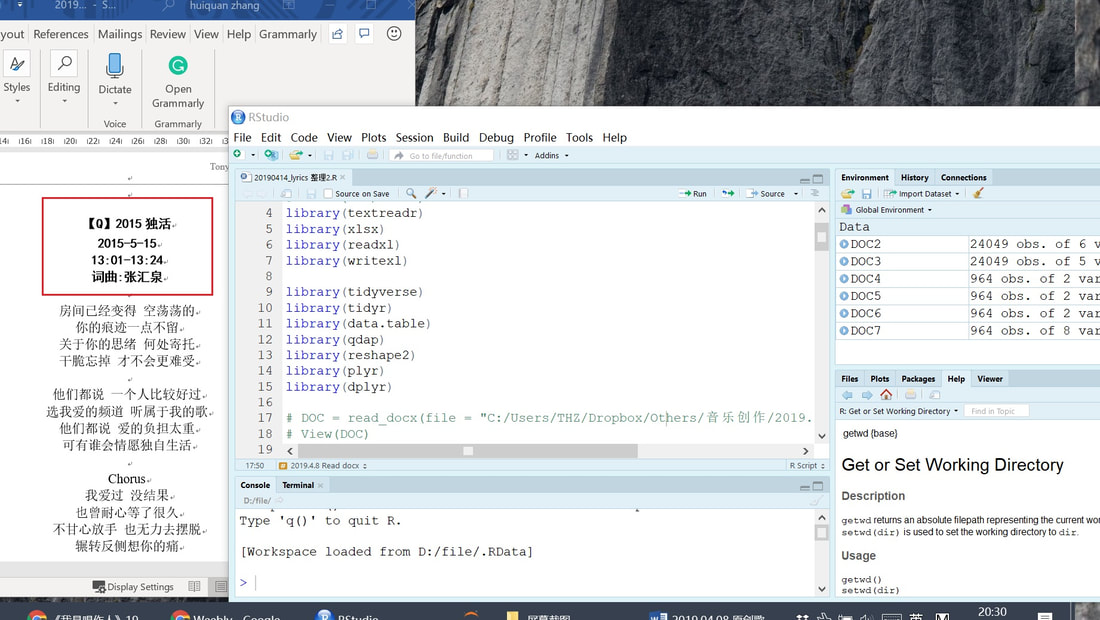

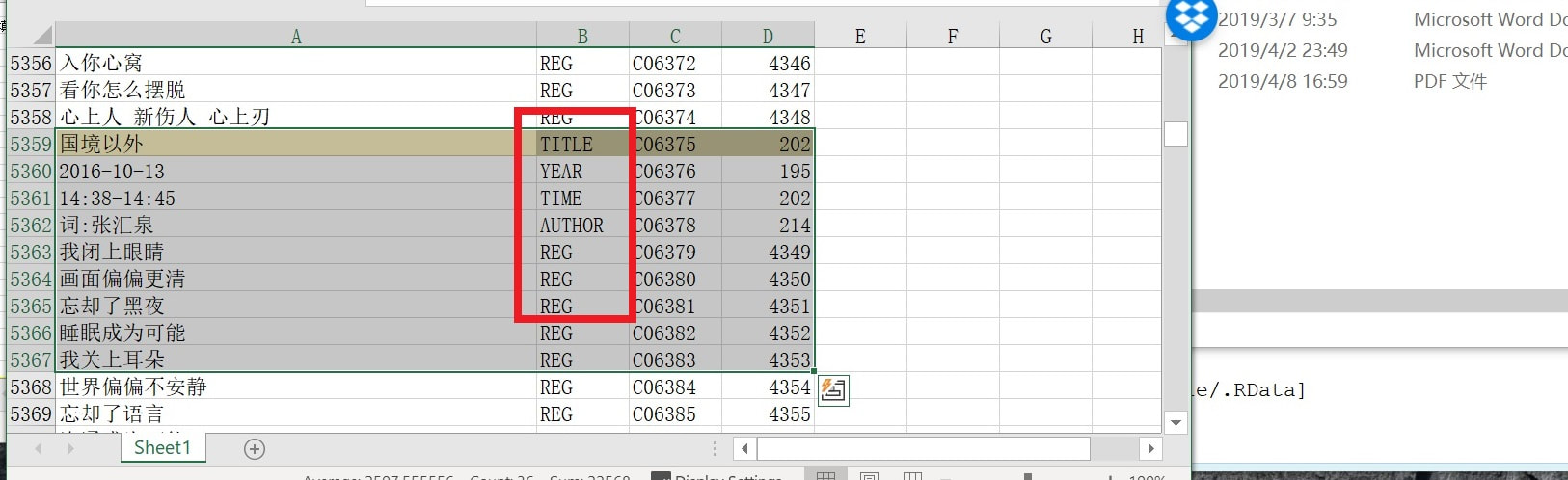

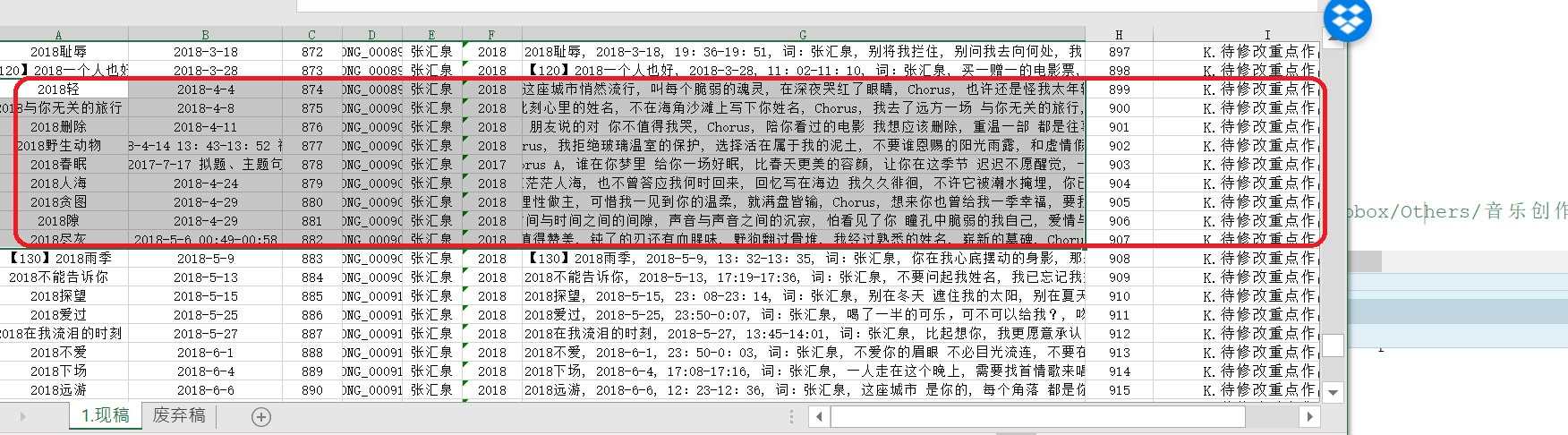

最近发现自己的歌词数逐渐接近1000,Word文档里的汉字字符数已经二十几万。一方面,继续用Word编辑似乎有些变慢(不知道这方面有什么解法?写长篇小说的朋友们都用什么比较轻量的写作软件?);另一方面,也想对自己的歌词进行一些数据分析,那么表格式数据存储(CSV,XLSX)+每首歌作为一个entry(row,observation)可能更为适合。但是对于1000首歌词+二十万字来说,手工复制粘贴、存储的劳动量,并不现实。于是想到,使用使用R语言简化工作流程。 ##################################### ############## 加载数据包 ############## ##################################### Sys.setlocale(category = "LC_ALL", locale = "US") # setwd("D:/file") library(textreadr) library(xlsx) library(readxl) library(writexl) library(tidyverse) library(tidyr) library(data.table) library(qdap) library(reshape2) library(plyr) library(dplyr) ##################################### ### read in docx file & convert to DATAFRAME ### ##################################### DOC = read_docx(file = "*PATH*/2019.04.08 原创歌词 单页.docx") # View(DOC) DOC1 = data.frame(DOC) # convert to data.frame DOC1 = as.data.table(DOC1) # VIEW DATA with head/tail functions # head(DOC1) tail(DOC1) ##################################### ## 用关键词检测grepl, 对半结构化文本进行识别 ### ##################################### DOC1$ATTR = NA # create empty variable DOC1$ATTR = ifelse(grepl("chorus", as.character(DOC1$DOC), ignore.case = T), "CHORUS", "REG") # 副歌部分——行识别 # DOC1$ATTR = ifelse(grepl("200", as.character(DOC1$DOC), ignore.case = T), "YEAR", DOC1$ATTR) # 年份——行识别 # DOC1$ATTR = ifelse(grepl("201", as.character(DOC1$DOC), ignore.case = T), "YEAR", DOC1$ATTR) # 年份——行识别 # DOC1$ATTR = ifelse(grepl(":", as.character(DOC1$DOC), ignore.case = T), "TIME", DOC1$ATTR) # 冒号/时间——行识别 # DOC1$ATTR = ifelse(grepl("张汇泉", as.character(DOC1$DOC), ignore.case = T), "AUTHOR", DOC1$ATTR) # 作词人——行识别 # ##################################### ######## 检查歌曲属性、是否识别错误 ######## ##################################### # 检查 # dist_tab(DOC1$ATTR) DOC1$ATTR[10273] # 检查特定行数 # 对于所有行——年份行数之前的一行——识别为歌曲标题 # # 很可能出现大量误差,但1200余行YEAR,989首歌词,# # 误差量大约二百行 doable # for (n in 1:25068) { DOC1$ATTR[n-1][DOC1$ATTR[n] == "YEAR"]="TITLE" } write.xlsx2(DOC1, "D:/1.xlsx") ## 手工调整:把所有TITLE行,漏掉的(约六十行)######### ## 误认的(约二百行)在EXCEL中手工调整、识别、检查 ##### ## 使用筛选、排序、查找、替换等功能。 ################ # 可以看到,上图:只要没有特别例外的状况,大多数歌词的区块属性都被准确识别了——标题行(TITLE)、年份行(YEAR)、时间行(TIME)、作者行(AUTHOR)、内容行(REGULAR) #################################### ########## 2019.4.8 read in xlsx ########### #################################### ## 读入手工清理后的 EXCEL数据 ## DOC2 = read.xlsx2("*PATH*/20190408_989SONGS.xlsx", 1) ## 所有TITLE标题之间的行数,都标定为同一个BLOCK. ## DOC2$TITLE_COUNT = sapply(1:length(DOC2$ATTR), function(i) sum(DOC2$ATTR[1:i]== "TITLE")) ## DOC2$NAME = NA DOC2$NAME = DOC2$NAME[match(DOC2$TITLE_COUNT, DOC2$TITLE_COUNT)] writexl::write_xlsx(DOC2, "D:/989songs.xlsx") as.character(DOC2$DOC [DOC2$ATTR=="TITLE"]) ########################################## ###### 2019.4.14 redo_title, long to wide data: ######## ########################################## # 这一步是把20000行歌词LONG to WIDE(长变宽),按照标题分组, # 整合成989首歌词。 # 使用到plyr函数包中的ddply函数,把所有“TITLE_COUNT”相同(也就是从属于同一个歌词BLOCK区块)的文本内容,贴合在一起,成为歌词; # 把第2段(一般来说是年份)、第3段(一般来说是写作时间)都提取出来。 # DOC3 = read.xlsx2("D:/994SONGS.xlsx", 1) DOC3 = readxl::read_xlsx("D:/994SONGS.xlsx", 1) DOC3$TITLE_COUNT = sapply(1:length(DOC3$ATTR), function(i) sum(DOC3$ATTR[1:i]== "TITLE")) DOC4 = plyr::ddply(DOC3, .(TITLE_COUNT), summarize, TXT = toString(DOC)) DOC5 = plyr::ddply(DOC3, .(TITLE_COUNT), summarize, TXT = toString(DOC[1])) DOC6 = plyr::ddply(DOC3, .(TITLE_COUNT), summarize, TXT = toString(DOC[2])) DOC7 = Reduce(function(x,y) merge(x = x, y = y, by = "TITLE_COUNT"), list(DOC4, DOC5, DOC6)) head(DOC7) DOC7$ID = seq(1, length(DOC7$TITLE_COUNT), 1) DOC7$RID = formatC(DOC7$ID, width = 6, flag = 0) DOC7$RID = paste0("SONG_", DOC7$RID) head(DOC7) names(DOC7) names(DOC7) = c("SERIAL", "LYRICS", "TITLE", "TIME", "ID", "RID") DOC7$LYRICS_BY = "张汇泉" DOC7$YEAR = substr(DOC7$TIME, 1, 4) write_xlsx(DOC7, "D:/20190413_lyrics.xlsx") # 结果见下图:成功转换(当然需要一些手动修改和纠错)# # Calculate distance in kilometers between two points

earth.dist <- function (long1, lat1, long2, lat2) { rad <- pi/180 a1 <- lat1 * rad a2 <- long1 * rad b1 <- lat2 * rad b2 <- long2 * rad dlon <- b2 - a2 dlat <- b1 - a1 a <- (sin(dlat/2))^2 + cos(a1) * cos(b1) * (sin(dlon/2))^2 c <- 2 * atan2(sqrt(a), sqrt(1 - a)) R <- 6378.145 d <- R * c return(d) } earth.dist(116, 40, 121, 31) # DISTANCE BETWEEN BEIJING AND SHANGHAI # [1] 1099.08 links <- "某网站链接"

# 自定义抓取函数 GrabWebpage <- function(d) { web = read_html(d, encoding="UTF8") # 读取数据,规定编码 web1 = web %>% html_nodes("a") %>% html_attr("class") num_where1 = match("blog-pager-older-link", web1) # 下一篇文章的网址链接 web1 = web %>% html_nodes("a") %>% html_attr("href") web1 = web1 [num_where1] # 下一篇文章的网址链接 # web2 = web %>% html_nodes("h2") %>% html_attr("class") num_where2 = match("date-header", web2) # 本篇文章的发布日期 # num_where2 web2 = web %>% html_nodes("h2") %>% html_text() web2 = web2 [num_where2] # 本篇文章的发布日期 # web3 = web %>% html_nodes("h3") %>% html_attr("class") num_where3 = match("post-title entry-title", web3) # 本篇文章的标题 web3 = web %>% html_nodes("h3") %>% html_text() web3 = web3 [num_where3] # 本篇文章的标题 # web4 = web %>% html_nodes("div") %>% html_text() web5 = web %>% html_nodes("div") %>% html_attr("class") num_where4 = match("post-body entry-content", web5) # 本篇文章的正文 web4 = paste(web4 [num_where4]) # 本篇文章的正文 Date = as.character(web2) Title = as.character(web3) Post = paste(as.character(web4), collapse=",,") DF = cbind(Title, Date, d, Post, web1) DF = data.frame(DF) write.csv(DF, paste("D:/",gsub(pattern="\n", replacement="", substr(as.character(DF$Title), start=1, stop=8)), ".csv", sep ="")) # 注意这里要把换行符号\n替换掉。 Sys.sleep(0.5) assign (web3, DF, envir = .GlobalEnv) } GrabWebpage(links) case = 1 # 使用while循环。 while(case < 1000) { # while-loop當符合裡面的條件時,就會一直重複括號內的程式碼,直到不符合為止 DF = GrabWebpage(links) links = as.character(DF$web1) case = case + 1 } Code

########## 20171011 Use State, Year to create a map. ################## # geocode("RUC") library(ggmap) library(ggplot2) library(fiftystater) # data("fifty_states") # this line is optional due to lazy data loading map = fifty_states rm(list=setdiff(ls(), c("map", "event"))) # event$STATEid = NA event = within(event,{ STATEid [ STATE=="AL"]="alabama"#1 STATEid [ STATE=="AK"]="alaska"#2 STATEid [ STATE=="AZ"]="arizona"#3 STATEid [ STATE=="AR"]="arkansas"#4 STATEid [ STATE=="CA"]="california"#5 STATEid [ STATE=="CO"]="colorado"#6 STATEid [ STATE=="CT"]="connecticut"#7 STATEid [ STATE=="DE"]="delaware"#8 STATEid [ STATE=="DC"]="district of columbia"#9 STATEid [ STATE=="FL"]="florida"#10 STATEid [ STATE=="GA"]="georgia"#11 STATEid [ STATE=="HI"]="hawaii"#12 STATEid [ STATE=="ID"]="idaho"#13 STATEid [ STATE=="IL"]="illinois"#14 STATEid [ STATE=="IN"]="indiana"#15 STATEid [ STATE=="IA"]="iowa"#16 STATEid [ STATE=="KS"]="kansas"#17 STATEid [ STATE=="KY"]="kentucky"#18 STATEid [ STATE=="LA"]="louisiana"#19 STATEid [ STATE=="ME"]="maine"#20 STATEid [ STATE=="MD"]="maryland"#21 STATEid [ STATE=="MA"]="massachusetts"#22 STATEid [ STATE=="MI"]="michigan"#23 STATEid [ STATE=="MN"]="minnesota"#24 STATEid [ STATE=="MS"]="mississippi"#25 STATEid [ STATE=="MO"]="missouri"#26 STATEid [ STATE=="MT"]="montana"#27 STATEid [ STATE=="NE"]="nebraska"#28 STATEid [ STATE=="NV"]="nevada"#29 STATEid [ STATE=="NH"]="new hampshire"#30 STATEid [ STATE=="NJ"]="new jersey"#31 STATEid [ STATE=="NM"]="new mexico"#32 STATEid [ STATE=="NY"]="new york"#33 STATEid [ STATE=="NC"]="north carolina"#34 STATEid [ STATE=="ND"]="north dakota"#35 STATEid [ STATE=="OH"]="ohio"#36 STATEid [ STATE=="OK"]="oklahoma"#37 STATEid [ STATE=="OR"]="oregon"#38 STATEid [ STATE=="PA"]="pennsylvania"#39 STATEid [ STATE=="RI"]="rhode island"#40 STATEid [ STATE=="SC"]="south carolina"#41 STATEid [ STATE=="SD"]="south dakota"#42 STATEid [ STATE=="TN"]="tennessee"#43 STATEid [ STATE=="TX"]="texas"#44 STATEid [ STATE=="UT"]="utah"#45 STATEid [ STATE=="VT"]="vermont"#46 STATEid [ STATE=="VA"]="virginia"#47 STATEid [ STATE=="WA"]="washington"#48 STATEid [ STATE=="WV"]="west virginia"#49 STATEid [ STATE=="WI"]="wisconsin"#50 STATEid [ STATE=="WY"]="wyoming"#51 }) # event$STATE = as.factor(event$STATE) event$STATEid = as.factor(event$STATEid) # map$id = as.factor(map$id) p = ggplot() + geom_map(data=map, map=map, aes(x=long, y=lat, map_id=id, group=group), # note here. id has to be equal with id; group can change fill="#ffffff", color="black")+ expand_limits(x = fifty_states$long, y = fifty_states$lat) + coord_map() + #scale_x_continuous(breaks = NULL) + #scale_y_continuous(breaks = NULL) + labs(x = "LONGITUDE", y = "LATITUDE") p ########## violence################### library(qdap) dist_tab(event$viold) event$viold = as.numeric(as.character(event$viold)) # ggplot(event,aes(factor(year),fill=factor(viold))) + geom_bar() # ggplot(event,aes(x=year, y=viold)) + geom_bar(stat = "summary", fun.y="mean") # ggplot(event,aes(x=year, y=CASE)) + geom_bar(stat = "summary", fun.y="sum") event_state = aggregate(event$viold, by=list(STATE=event$STATEid, YEAR =event$year), FUN=sum) # event_state1960 = event_state [event_state$YEAR==1960,] # event_state1960 = na.omit(event_state1960) # event_state1960 = merge(event_state1960, map1, by=c("STATE"), all=F) cnames = aggregate(cbind(long, lat) ~ id, data = map, FUN = function(x) mean(range(x))) cnames$angle = 0 # data = merge(event_state, cnames, by.x="STATE", by.y="id", all.x=T) data$X=data$x+1 data$radius= log(data$X) library(scatterpie) windows() ggplot() + geom_map(data=map, map=map, aes(x=long, y=lat, map_id=id, group=group), # note here. id has to be equal with id; group can change fill="#ffffff", color="black")+ geom_text(data=data, aes(long, lat, label = STATE), size=3, color="black")+ geom_scatterpie(data=data [data$YEAR=="1963",], aes(r=radius, x=long, y=lat), col=c("X")) ggplot() + geom_map(data=map, map=map, aes(x=long, y=lat, map_id=id, group=group), # note here. id has to be equal with id; group can change fill="#ffffff", color="black")+ geom_point(aes(x=LON, y=LAT, show_guide = TRUE, colour=form1), data=event[event$LON> -125 &event$LON< -55 &event$LAT<55&event$LAT>25,], alpha=.7, na.rm = T) + scale_color_gradient(low="beige", high="red")+ ggtitle("USA, 1960, Violent Cases")+ theme_grey() + theme(plot.title = element_text(hjust = 0.5)) |

|||||||||||||||